Raft is a consensus algorithm that keeps your distributed system consistent, even if some servers fail. It works by electing a leader that manages all updates and keeps every server's log in agreement. When a command is received, the leader replicates it to the followers and confirms it once a majority of servers have stored it. This approach makes building reliable apps easier by keeping the servers synchronized. The sections below explain how the pieces fit together.

Key Takeaways

- Raft keeps all servers in agreement on the same sequence of commands, even during failures, as long as a majority of them are running and can communicate.

- The leader manages log entries, replicates them to followers, and coordinates commands from clients.

- Leader election happens automatically when the current leader fails, so the system resumes operation after a brief pause.

- Raft is designed to be simple, making implementation and troubleshooting easier for developers.

- It provides fault-tolerance, so systems stay reliable despite server crashes or network issues.

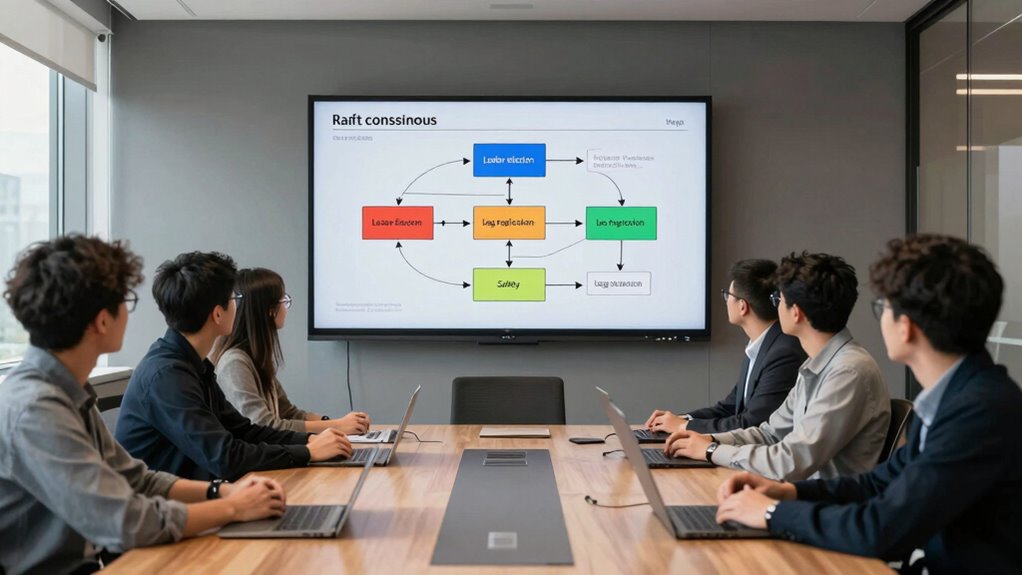

Understanding the Raft consensus algorithm is crucial for developers building reliable distributed systems. Raft helps your system stay consistent even when network failures or server crashes happen. At its core, Raft manages how multiple servers agree on the same state, making sure every node has the same data. This process involves key concepts like leader election and log replication, which work together to keep your system running smoothly.

When you start a Raft-based system, the first thing that happens is leader election. Imagine a group of servers where one needs to act as the coordinator: this is your leader. Every server starts as a follower. A follower that hears nothing from a leader for a while becomes a candidate and asks the other servers for their votes, and the candidate that collects votes from a majority becomes the leader. Elections are numbered by term, and each server votes at most once per term, so there can be at most one leader per term. If the current leader crashes or becomes unresponsive, the remaining servers hold a new election, so your system becomes available again after a short pause and stays consistent.

Once a leader is elected, it takes charge of log replication. You can think of the log as a sequential record of all the commands in your system. The leader receives new commands from clients and appends them to its log. It then sends these entries to the follower nodes, which append them to their own logs. Once a majority of servers have stored an entry, the leader commits it and applies the change to the system's state; followers learn the new commit point from the leader and apply the same entry. This way, every server processes the same commands in the same order, preventing divergence. A follower that was slow or offline may lag behind for a while, but the leader keeps retrying until that follower's log matches its own.

This design is what gives Raft its fault tolerance: the cluster keeps working as long as a majority of its servers are up and can reach each other, and a server that crashed catches up from the leader when it returns. Elections are designed to finish quickly, which keeps downtime short. Understanding how consensus is reached helps you troubleshoot issues and tune performance, and knowing how the cluster behaves during network partitions and leader re-elections makes your system more robust.
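The majority rule for committing can be sketched in a few lines of Python. This is a minimal illustration, not a full implementation: the names `majority_match_index`, `advance_commit_index`, `match_index`, and `log_terms` are hypothetical, and the sketch assumes a 1-indexed log in which `match_index` holds the highest index known to be stored on each server, including the leader itself. It also reflects the rule from the Raft paper that a leader only commits by counting replicas for entries from its own current term.

```python
def majority_match_index(match_index: list[int]) -> int:
    """Return the highest log index stored on a majority of servers.

    match_index has one value per server (leader included): the highest
    log index known to be stored on that server.
    """
    ranked = sorted(match_index, reverse=True)
    quorum = len(match_index) // 2 + 1
    # The quorum-th largest value is stored on at least `quorum` servers.
    return ranked[quorum - 1]


def advance_commit_index(
    commit_index: int,
    current_term: int,
    log_terms: list[int],
    match_index: list[int],
) -> int:
    """Return the new commit index for the leader.

    log_terms[i - 1] is the term of the entry at 1-based log index i.
    The commit index only moves forward, and only to an entry that was
    created in the leader's current term.
    """
    candidate = majority_match_index(match_index)
    if candidate > commit_index and log_terms[candidate - 1] == current_term:
        return candidate
    return commit_index
```

For example, in a five-server cluster where the servers hold entries up to indexes 5, 5, 3, 2, and 1, index 3 is the highest one present on three servers, so it is the furthest the leader can commit.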

Throughout this process, the leader plays a central role in coordinating operations. It manages the flow of data, keeps logs synchronized, and sends regular heartbeats so followers know it is still alive; when those heartbeats stop, the followers start a new election. Your system benefits from Raft's straightforward approach because it breaks a complex consensus problem into understandable steps, making it easier to implement and troubleshoot. By understanding leader election and log replication, you gain insight into how Raft provides fault-tolerance and consistency. This knowledge helps you build distributed applications that are both reliable and resilient, even in unpredictable network environments. Fundamentally, Raft's design provides a clear, practical framework for keeping your servers in agreement, so your system stays consistent and remains available as long as a majority of servers can communicate.


Frequently Asked Questions

How Does Raft Handle Network Partitions?

When a network split occurs, only the side that still contains a majority of the servers can elect a leader and commit new entries. A leader stranded on the minority side cannot reach a majority, so nothing it accepts gets committed, and the minority side makes no progress. This is how Raft prevents split-brain scenarios: two leaders can never both commit conflicting entries. When the partition heals, the stale leader sees the higher term, steps down to follower, and log replication overwrites any uncommitted entries on the minority side so they match the current leader's log.
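To make the majority rule concrete, here is a small Python sketch that checks which side of a partition, if any, can still elect a leader. The function name `majority_side` and the server names are hypothetical and only serve the illustration.

```python
from typing import Optional


def majority_side(groups: list[set[str]], cluster_size: int) -> Optional[set[str]]:
    """Return the partition group that holds a majority, or None.

    groups lists the sets of servers that can still reach each other.
    At most one group can contain more than half of the cluster.
    """
    quorum = cluster_size // 2 + 1
    for group in groups:
        if len(group) >= quorum:
            return group
    return None


# Five servers split 2 / 3: only the three-server side can make progress.
split = [{"s1", "s2"}, {"s3", "s4", "s5"}]
active = majority_side(split, cluster_size=5)
```

An even split, such as two servers against two in a four-server cluster, leaves no side with a majority, so the whole cluster pauses until the partition heals.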

What Are Common Pitfalls When Implementing Raft?

Two common pitfalls are poorly tuned heartbeats and delayed log compaction. If the heartbeat interval is too close to the election timeout, a brief network delay can make followers time out and trigger unnecessary elections. If logs are never compacted into snapshots, they grow without bound, which slows restarts and makes it expensive to bring a lagging follower up to date. To avoid these issues, keep the heartbeat interval well below the election timeout and compact the log on a regular schedule. Review both settings as your workload changes, because the resulting problems tend to appear as intermittent leader changes and gradual slowdowns rather than obvious failures.
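A quick Python sketch of the timing relationship follows. The 150 to 300 ms range is the example range suggested in the Raft paper; the helper names and the safety margin of 3 are assumptions made for this illustration, not values from any particular library.

```python
import random


def pick_election_timeout(low_ms: float = 150.0, high_ms: float = 300.0) -> float:
    """Pick a randomized election timeout in milliseconds.

    Randomizing per server makes it unlikely that several followers
    time out at the same moment and split the vote.
    """
    return random.uniform(low_ms, high_ms)


def timing_is_sane(heartbeat_ms: float, election_low_ms: float, margin: int = 3) -> bool:
    """Check that several heartbeats fit inside the shortest election timeout.

    margin is an illustrative assumption: with margin=3, a follower must
    miss about three heartbeats in a row before it starts an election.
    """
    return heartbeat_ms * margin <= election_low_ms
```

With these assumptions, a 50 ms heartbeat against a 150 ms minimum election timeout passes the check, while a 100 ms heartbeat does not.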

How Does Leader Election Work in Raft?

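An election starts when a follower hears nothing from a leader before its election timeout expires. The follower becomes a candidate, increments its term, votes for itself, and sends RequestVote messages to the other nodes. Each node grants at most one vote per term, and only to a candidate whose log is at least as up to date as its own. If a candidate secures votes from a majority, it becomes the leader and starts sending heartbeats. If no one wins, for example because the vote is split, the candidates time out and start another election; randomized timeouts make repeated ties unlikely. This ensures a new leader is chosen reliably, maintaining consensus and coordination across the cluster even if the current leader fails.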
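The voting rules can be sketched as a small Python handler. This is a simplified illustration under stated assumptions: the `Node` class and `handle_request_vote` function are hypothetical names, the node keeps only the term and index of its last log entry rather than a full log, and persistence and networking are left out.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Node:
    current_term: int = 0
    voted_for: Optional[str] = None
    last_log_term: int = 0   # term of this node's last log entry
    last_log_index: int = 0  # index of this node's last log entry


def handle_request_vote(
    node: Node,
    term: int,
    candidate_id: str,
    last_log_index: int,
    last_log_term: int,
) -> bool:
    """Decide whether this node grants its vote to the candidate."""
    # Reject candidates from an older term.
    if term < node.current_term:
        return False
    # A newer term resets this node's vote.
    if term > node.current_term:
        node.current_term = term
        node.voted_for = None
    # The candidate's log must be at least as up to date: compare the
    # last entry's term first, then its index.
    log_ok = (last_log_term, last_log_index) >= (
        node.last_log_term,
        node.last_log_index,
    )
    # One vote per term; repeating a vote for the same candidate is fine.
    if node.voted_for in (None, candidate_id) and log_ok:
        node.voted_for = candidate_id
        return True
    return False
```

A candidate would call this on each peer (over the network in a real system) and become leader once the granted votes, including its own, reach a majority of the cluster.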

Can Raft Be Used in Multi-Data Center Setups?

Raft can be used in multi-data center setups, where it keeps data consistent across locations. You benefit from data center redundancy and cross-region replication, so your system stays available if one data center fails, provided the remaining data centers still hold a majority of the servers. The trade-off is latency: every commit waits for a round trip to a majority, so writes are only as fast as the links between regions allow. Election timeouts also need to be long enough to tolerate cross-region delays, or normal network jitter will trigger unnecessary elections.

What Are Performance Considerations for Large Clusters?

In large clusters, watch for leader instability and replication overhead, both of which slow down consensus. The leader sends every entry to every follower, and each commit waits for a larger majority, so adding nodes increases the leader's load and can lengthen both replication and elections. Keep leadership stable by monitoring how often elections occur and how long they take, and by tuning timeouts to match your network. Scaling efficiently means balancing the number of nodes against the cost of communication, so the cluster stays responsive and resilient as it grows.
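The arithmetic behind cluster sizing is simple enough to sketch in Python. The function names below are hypothetical; the formulas follow directly from the majority rule.

```python
def quorum_size(cluster_size: int) -> int:
    """Number of servers that must store an entry before it commits."""
    return cluster_size // 2 + 1


def tolerated_failures(cluster_size: int) -> int:
    """Number of servers that can fail while a majority remains."""
    return cluster_size - quorum_size(cluster_size)


# Compare a few cluster sizes.
sizes = {n: (quorum_size(n), tolerated_failures(n)) for n in (3, 4, 5, 7)}
```

Note that a four-server cluster tolerates one failure, the same as a three-server cluster, while requiring a larger quorum. This is one reason odd cluster sizes are the usual choice.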


Conclusion

Now that you’ve navigated the seas of Raft consensus, you’re better equipped to chart a course through distributed systems. Think of Raft as your trusty lighthouse, guiding your application safely through storms of data inconsistency and network turbulence. With this knowledge, you can build resilient, synchronized apps that stand tall amid chaos. Embrace the journey, and let Raft be your compass in the vast ocean of distributed computing, steering you toward reliable, harmonious systems.
